In the field of IS and management, we often put forward a certain conception of the organisation and of the social. In contemporary business consulting and management academia, the organisation is often conceptualised as a hierarchical open system in which a body of knowledge supplies the management system with the basis for rational decision making. Alternative academic approaches are influenced by literary studies and Gadamer’s hermeneutics (Gadamer, 1975), promoting the need for understanding and context, the particular rather than the universal and manageable. Between these two poles, Anglo-Saxon and continental philosophy, each fighting for its own conception of subjectivity and objectivity and each defining its own criteria for truth and meaning, one finds systems theory and cybernetics, which propose a model for generalising structures and properties across different phenomena in the world: Bertalanffy (Bertalanffy, 1969) suggests a unified cybernetic model for living, mechanical, and social systems, whereas von Foerster (Von Foerster, 2003) and Luhmann (Luhmann, 1995) suggest a second order model based on constructed observation and interpretation. In organisation studies, first and second order systems theory each postulate their own conception or construction of social reality: Parsons defines the social as actions or events referring to each other within a structural organising of social functions, whereas Luhmann flips the tin can with a functional organisation of social structures based on communication, reproducing and sustaining itself through Maturana and Varela’s concept of autopoiesis (Maturana & Varela, 1980).

Amidst the attempt of IS management, and thus of EA, to establish a common, trans-disciplinary foundation for research, there appears to be an ontological schism over what the social is and what organisations really are. Is it a collective intelligence or logic of rational decision making? Is it a reactive, intersubjective collective attempting to make sense of the world in hindsight through history and culture? Or is it a system, or a construction of a system, that organises, structures, or communicates through constant adaptation and recursive reproduction only by reference to its own recursion and reproductivity? The latter approach dissolves the boundaries of the former two by creating a boundary of distinction, located at the edge of every possible system, that is even more important than the understanding subject itself. It is the distinction between system and environment that generates or fabricates meaning and truth, but it comes at the cost of reducing our very own processes of cognition and sensemaking to a set of vibrating antennas or satellites mounted at the fragile surface of every human system.

An ontology of the social is thus far from complete. Enterprise Architecture (EA) seeks to address this by building layers of abstraction and control, thereby assuming that static systems models of socio-technical relations yield manageability and transparency. Accountability is achieved by linking formal role descriptions to process models and system landscapes, often positioned in a well-defined hierarchy and stored in a database repository for later reference and reuse. In order to reuse ‘best practices’ and assure a certain level of maturity in framework and methodology, enterprises often implement their architecture practice against existing reference frameworks and enterprise meta-models. Frameworks such as FEAF even include a CMMI-like maturity model for EA, which assesses the success of an architecture program by measures such as completeness and integration. OMB, the US Federal Office of Management and Budget, has furthermore published a set of measures of architectural completeness for evaluating US Federal Agencies. The highest achievement, level 5, is the architectural utopia in which the organisation practising EA corrects its own business failures by architectural inspection. Architecture is here synonymous with optimising an organisation.

Given the above reflections on what the social really is, is it philosophically reasonable to suggest that a stable, decomposable, hierarchical model, which most enterprise meta-models are, is capable of building a comprehensive model of the social? Is it really meaningful to stretch virtually any organisation, be it government or private, along a five-level diagram and measure it by how well described its architectural elements are? And what happens when the Federal agency hits the ceiling after level 5? These are important questions that information and organisation science ought to ask. Unfortunately, they seldom do. Maturity models, in the classic form of a five-step ladder, are an inherent part of any contemporary management/IS theory: process maturity, architecture maturity, service maturity, integration maturity. The five-step Capability Maturity Model (Paulk, 1995) has its roots in systems engineering carried out by engineers building space shuttles for NASA. As universal as it may be, the problems, issues, and solutions faced by modern organisations are far more muddy, messy, and ill-defined than those originally faced by defence contractors and DoD bureaucrats. Such fast-paced, deep problems are also characterised as wicked problems (Rittel & Webber, 1973):

- Wicked problems have no definitive formulation. One can infer that the problem exists, but will never be able to fully document the problem.

- The solution to a wicked problem is “good or bad”, not “true or false”.

- Every possible solution is a one-shot operation, as every solution attempt leaves a trace which cannot be undone.

- Each wicked problem is unique and may itself be a symptom of another, underlying wicked problem.

Through my previous research, I have suggested a systems theoretical approach towards understanding and explaining EA. Systems theory is helpful for describing the messy complexity of social and communicative structures. Second order systems theory adds a rich, dynamic theory for understanding communication inside and outside the organisation by describing the exchange of utterances between human actors in search of meaning (Jensen, 2010). I believe, however, that these two key conceptions of enterprise planning and governance can furthermore be extended into a general theory of EA by including Deleuze’s theory of the rhizome.

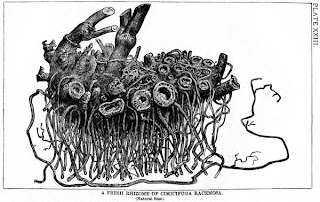

Deleuze (Deleuze & Guattari, 1988) describes the rhizome structure (Deleuze & Guattari, 1976) as a meaningful alternative for uncovering complex structures, be they social or biological. Western society, Deleuze explains, has built its historicity and philosophy on the basis of binary structures: true-false, yes-no, top-bottom, maturity-immaturity. Contemporary EA frameworks are, in fact, highly binary: layers separated by clear boundaries, processes with a start and an end, structured organisation charts and capability maps with a top and a bottom. The rhizome is a viable alternative since it assumes an inherent complexity in what it is intended to describe. The rhizome is constantly transforming and morphing itself, making it virtually impossible to map out its structure completely at any point in time. This is exactly how wicked problems occur. Wicked, messy problems could, in fact, be described as rhizomatic structures. The rhizome structure also applies well to the socio-technical nature of organisations, as the dissipative relationships between humans, technology, and organisation structures form a complex, dynamic, and transforming entity with no clear, formal, or necessarily logical order. This rhizomatic relationship is probably best illustrated in the field of technology adoption and diffusion in private enterprises, where traditional positivist approaches to management and innovation struggle to explain how and why technology trends emerge and behave. This reflection on Deleuze leads to the following important claim:

Organisations are complex, dissipative structures constantly transforming complex human knowledge and social relationships. A rhizomatic systems model satisfies such a conception of organisational reality. Hence, Enterprise Architecture, in its search for whole-of-enterprise views, should adopt rhizomatic theory for uncovering and understanding the true messiness of organisations as socio-political habitats.

Understanding Enterprise Architecture as a rhizomatic systems practice, however, comes at the cost of killing certain darlings. The first darling is the idea of organisations as stable structures operating on explicit, verifiable knowledge, which in turn can be divided into clear architectural layers and segments. The second darling is the conception of a universal maturity model explaining the natural progression towards “EA nirvana”. No such model exists.

- Layers, segments, and hierarchical models start from a Westernised, binary view of the world. Layering suggests decomposability and abstraction of organisational complexity. A rhizome does not have such properties. The messy, social facets of organisational life cannot be decomposed or functionally abstracted. The social does not have a single function and thus cannot be functional. Wicked problems, as they emerge from social interactions and organisational problems, are rhizomatic and cannot be explained fully through rationalist models.

- Maturity models are inherently binary. They suggest a natural progression towards the optimal level 5, somewhat similar to a tree stretching its branches towards the rising sun. The rhizome is the exact opposite of a tree structure, as its roots and shoots grow and form in any direction, shrouding and shifting its original structure. Social structures, apart from the general statistical patterns uncovered by social psychology, do not follow universal laws of transformation or branching, and hence it is impossible and meaningless to suggest a generic, universalistic maturity model of social behaviour in EA adoption and planning. There is no such nirvana of Enterprise Architecture, and if there ever were, it would be constantly shifting and transforming depending on the current managerial climate, problems of planning, and struggle for control inside the organisation. This relationship of management, planning, and control is itself rhizomatic.

For Enterprise Architecture to fully account for these sacrifices, it must adopt a view of the enterprise as a non-linear, interconnected multiplicity, for which structures can only be meaningfully traced and described in hindsight. Traces always remain interpretations. Enterprise modelling involves tracing organisation structures, but as these structures are traced and interpreted, they shift and transform into a different multiplicity. Enterprise Architecture is thus a semiotic practice of tracing and interpreting organisations as complex signs. The outputs, the long-term plans, roadmaps, and meta-models, are merely simplified pictures of these dissipative signs. Only by accepting these aspects of enterprise reality can Enterprise Architecture truly characterise the challenges and solutions in strategic planning and enterprise management.

References:

Bertalanffy, L. v. (1969). General System Theory: Foundations, Development, Applications. New York: G. Braziller.

Deleuze, G., & Guattari, F. (1976). Rhizome: Introduction. Paris: Éditions de Minuit.

Deleuze, G., & Guattari, F. (1988). A Thousand Plateaus: Capitalism and Schizophrenia. London: Athlone Press.

Gadamer, H.-G. (1975). Truth and Method. London: Sheed & Ward.

Jensen, A. O. (2010). Government Enterprise Architecture Adoption: A Systemic-Discursive Critique and Reconceptualisation. Copenhagen Business School.

Luhmann, N. (1995). Social Systems. Stanford, Calif.: Stanford University Press.

Maturana, H. R., & Varela, F. J. (1980). Autopoiesis and Cognition: The Realization of the Living. Dordrecht, Holland; Boston: D. Reidel Pub. Co.

Paulk, M. C. (1995). The Capability Maturity Model: Guidelines for Improving the Software Process. Reading, Mass.: Addison-Wesley Pub. Co.

Rittel, H., & Webber, M. (1973). Dilemmas in a General Theory of Planning. Policy Sciences, 4.

Von Foerster, H. (2003). Understanding Understanding: Essays on Cybernetics and Cognition. New York: Springer.