If you are not a practicing Enterprise Architect, words such as COBIT, TOGAF, ITIL and ZACHMAN will either mean nothing to you or, more often than not, confuse you. Most IT professionals relate these terms to concepts such as architecture framework, technology framework, standards, modelling and analysis, which may or may not be correct depending on the context. However, thanks to greater awareness of Enterprise Architecture in the last decade or so, it should still be easy for a keen Enterprise Architecture enthusiast to find out more about these and other similar Enterprise Frameworks. Most of the frameworks listed above can be downloaded free for a limited-time review, or even used free of charge for non-commercial, internal enterprise purposes (see useful links and references below). The real question, however, which seldom gets asked, is how these frameworks relate to each other, if at all. How can they interact and collaborate with each other? What are the considerations of such engagement across frameworks? And, more importantly, is it worth it from a business value and relevance perspective? Such questions would ideally demand a decent whitepaper analysing these interactions. Given time constraints, however, I am presenting my thoughts in this blog post as an executive summary.

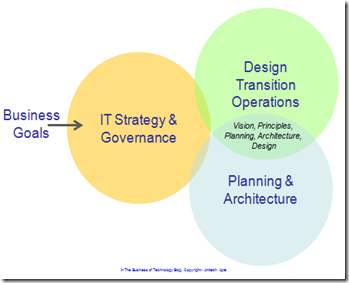

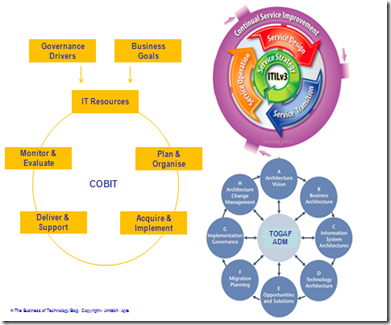

Before discussing a few frameworks and their potential linkage with each other, I would like to present the business and IT context of such interaction. Based on my practical experience, I would propose a simple map, as presented in the figure below. Business goals and objectives demand a strategic IT response in terms of strategic and tactical IT programs, investments and activities. These need to be governed to ensure that deliverables comply with the business objectives. Strategy needs planning and architecture disciplines to ensure that strategic intents are given the shape of tangible constructs. This is where enterprise architects convert the abstract into specific plans and architectures, and where artefacts such as business architecture, application architecture and infrastructure architecture get conceived. Such plans and architectures need to be further developed into detailed designs, transition plans and activities. More importantly, the resultant IT systems and solutions are required to be operationally ready and feasible. I am aware that this presents an overly simplistic picture of an often much more complex and complicated technology implementation reality. But the purpose here is to give a broad, high-level overview of the chain of actions which needs to take place in the journey from business goals to business processes, applications and solutions, and on to their eventual technology implementation and operations.

*Figure: Why and Where Do Frameworks Matter?*

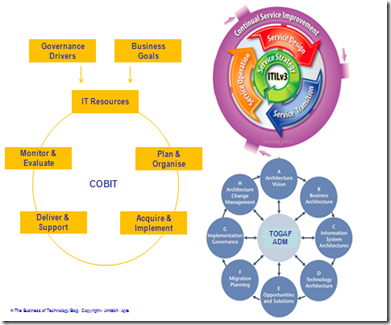

Going back to the set of questions I raised earlier in this post, let us now try to map a few leading frameworks to the concept and journey outlined above. I have picked three leading frameworks for this purpose: COBIT, TOGAF and ITIL. In this map, COBIT provides the overarching Strategy and Governance mechanism. It takes business goals and governance drivers as inputs and then provides a seamless mechanism to link IT resources with the planning, implementation, delivery and monitoring of delivered systems. I would like to propose, however, that COBIT needs a more thorough framework such as TOGAF to further elaborate and develop the Planning and Architecture activities in the journey. The TOGAF ADM provides a very good and comprehensive process discipline to take requirements through steps such as vision, architecture, solution definition, planning and change management. At this point, however, I would like to suggest that to take the architecture to the next level of detailed design and transition planning, a framework such as ITIL will be extremely useful. ITIL takes a service view of the world in its definition of systems, not a mere technology view. ITIL practitioners will put operability ahead of technology or architecture purity, and rightfully so. ITIL sees the service through from design to transition and operations.

*Figure: COBIT, TOGAF and ITIL in Perfect Harmony*
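For readers who prefer to see the arrangement as data, the mapping described above can be written down as a small Python sketch. This is only an illustration of the proposal in this post, not anything defined by COBIT, TOGAF or ITIL themselves; the names `Stage`, `JOURNEY`, `framework_for` and `handoffs` are hypothetical and exist only for this example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Stage:
    """One stage of the business-goals-to-operations journey (illustrative only)."""
    name: str
    framework: str
    outputs: Tuple[str, ...]


# Assumption: this ordering and assignment mirrors the arrangement proposed in
# this post; other arrangements (e.g. TOGAF as the umbrella) are equally valid.
JOURNEY: Tuple[Stage, ...] = (
    Stage("Strategy and Governance", "COBIT",
          ("governed IT programs, investments and activities",)),
    Stage("Planning and Architecture", "TOGAF",
          ("business architecture", "application architecture",
           "infrastructure architecture")),
    Stage("Design, Transition and Operations", "ITIL",
          ("detailed designs", "transition plans", "operational services")),
)


def framework_for(stage_name: str) -> str:
    """Return the framework this post assigns to the named stage."""
    for stage in JOURNEY:
        if stage.name == stage_name:
            return stage.framework
    raise KeyError(stage_name)


def handoffs(journey: Tuple[Stage, ...] = JOURNEY) -> List[Tuple[str, str]]:
    """List the framework-to-framework handoffs between consecutive stages."""
    return [(a.framework, b.framework) for a, b in zip(journey, journey[1:])]


if __name__ == "__main__":
    for upstream, downstream in handoffs():
        print(f"{upstream} hands over to {downstream}")
```

The handoff points are where the frameworks have to interact, so they are the places where an organisation using more than one framework would need to agree on shared inputs and outputs.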

I have to clarify that the above is simply one way of arranging these very useful frameworks to work with each other. It can be argued that TOGAF, in certain instances, can provide the overarching umbrella for such a journey from requirements to delivery. Or indeed, ITIL on its own can be adequate to see the system design through to implementation. There is no right or wrong with Enterprise Architecture, and that, I would argue, is both the strength and the weakness of the practice. The purpose of this post, as I stated earlier, was simply to showcase the benefits and effectiveness of such Enterprise Frameworks working together in perfect harmony!

Now to the real question: what is the business benefit of this? Is it worth the investment and hassle? The answer is that it depends on the business context. The more complicated, complex and distributed your business requirements and the resultant technology response, the more likely it is to be worth it. It may be overkill if your requirements and the responding systems are not so complicated. In the long run, however, Enterprise Architecture is about the entire business and technology estate, not just one program or project, and hence you will often find that medium to large organisations use more than one framework. In most cases, such frameworks do not interact well, and this is where my draft proposal above may be useful.

References and relevant links for further reading:

- TOGAF – The Open Group Architecture Framework

- ITIL – Information Technology Infrastructure Library

- COBIT – Control Objectives for Information and Related Technologies

- ZACHMAN – the Zachman Framework, named after its originator, John Zachman