The Problem

According to United States records, cyber attacks (crimes, intelligence gathering, and warfare) went up 1300 percent from 2006 to 2016. Other reports cited in Forbes Magazine indicate a 200 to 450 percent increase in attacks between 2015 and 2016. I suspect, though, that these numbers vastly underestimate the total number of attacks. I know that in the late 1980s one company was averaging 10,000 attacks per day on its website and its access points to the internet, of which 4,000 originated in Russia (then the USSR), China, North Korea, and the like.

There are two goals for attacks: to disrupt the entire IT infrastructure, or to gather or change protected data for various nefarious purposes. The reasons behind these attacks are many (monetary gain, political change, and so on), and the “so on” is too long to enumerate.

The cost of preventing and mitigating the effects of these attacks has spawned a new multi-billion dollar industry. Rather than paying those costs indefinitely, what is needed is an entirely new system (network and datastore) that completely defeats all attack vectors. That is what I’m proposing here.

The Solution: A Disruptive Architecture (The Once and Future System)

The Goal

The goal of the architecture presented here is to define a highly secure system for the transmission and storage of data.

The architecture is for a fundamentally different “new” network and datastore. I put “new” in quotes because I based the architecture on a number of concepts and standards from the late 1970s to the mid-1990s that were abandoned for economic and business-political reasons. When I submitted the architecture for a patent, I was told that, even though it uses old concepts and standards in a new way, it is unpatentable because it is based on well-known concepts and standards.

Consequently, I’m presenting it in this post in the hope that someone will take a serious look at it and contact me, so that I can present the details and we can build a secure network and datastore.

The Architecture

My fundamental idea is to create a separate “data only” network and datastore. To those looking at an organization’s short-term costs, a worldwide network for the storage and transmission of data, separate from the Internet “of everything,” may at first seem a ludicrous idea. But what would it cost the organization to have its data stolen, corrupted, or destroyed? And remember that securing data on a cloud or across the internet carries both initial and recurring costs.

This new architecture has five components. One of them has evolved over the past twenty years. One of them was declared obsolete thirty years ago. One of them is based on petrified standards of the 1980s. And one uses a new twist on current hardware and software. The fifth is a particular form of governance.

New User Interface Security

The base technology of the new user interface has been evolving for at least the past twenty years. It combines three functional technologies. The first is biometric recognition. Any secure system requires some form of authentication: proof that you are who you say you are. Various forms of biometric authentication (facial recognition, fingerprint identification, retinal pattern recognition, and so on) are currently the forms of identification least likely to be broken by cyber attacks.

The second security technology is a version of the smartcard: a credit-card-sized card with an embedded data storage chip. In this new scheme, the card reader would communicate the location, time of day, and date to the card, whereupon the card would generate a pass code based on those parameters.

At the same time, the reader would generate its own pass code from the same parameters. The system would accept the identification if and only if the two matched. Since any secure system requires at least two-factor authentication, a user would need both the smartcard (which could also store the biometric data) and their own body.
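The card-and-reader matching can be sketched in Python. This is a minimal illustration under stated assumptions, not the design itself: it assumes the card and the reader share a secret key and derive the pass code with an HMAC (a standard keyed hash) over the location plus the date and time truncated to the minute. The key, the location string, and the functions `pass_code` and `authenticate` are hypothetical.

```python
import hashlib
import hmac
from datetime import datetime, timezone

def pass_code(secret: bytes, location: str, when: datetime, digits: int = 8) -> str:
    """Derive a numeric pass code from location, date, and time of day."""
    # Truncate to the minute so card and reader hash the same time window.
    stamp = when.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M")
    message = f"{location}|{stamp}".encode("utf-8")
    digest = hmac.new(secret, message, hashlib.sha256).digest()
    # Reduce the first 8 bytes of the MAC to a fixed number of decimal digits.
    value = int.from_bytes(digest[:8], "big") % (10 ** digits)
    return str(value).zfill(digits)

def authenticate(card_code: str, reader_code: str, biometric_ok: bool) -> bool:
    """Accept if and only if the codes match AND the biometric check passed."""
    # compare_digest avoids leaking how many leading characters matched.
    return biometric_ok and hmac.compare_digest(card_code, reader_code)

# Usage with a hypothetical shared secret and reader location.
secret = b"hypothetical-shared-secret"
now = datetime(2024, 1, 15, 9, 30, 12, tzinfo=timezone.utc)
card_code = pass_code(secret, "building-7/reader-3", now)    # computed on the card
reader_code = pass_code(secret, "building-7/reader-3", now)  # computed by the reader
print(authenticate(card_code, reader_code, biometric_ok=True))  # True
```

Because the code depends on where and when the card is presented, a code captured at one reader is useless at another reader or a minute later.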

Finally, authorization and access control are both static for a given user interface to the system. This means that the user of a given device (be it a terminal, PC, smart phone, etc.) can gain access only to the set of data, records, or summaries to which they are entitled.

So a contract specialist has no access, or only limited access, to the engineering data for the contract. If the contract specialist attempted to sign on to a device for which he was not preapproved, he could not get to even the data to which he is entitled, because an individual must be preapproved for every terminal he or she wants to use.
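A sketch of the static, per-device access rule, assuming the preapproved devices and the entitlements are simple lookup tables. `AccessPolicy` and all user, device, and data set names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AccessPolicy:
    """Static access tables; changed only through the governance process."""
    approved_devices: dict  # user ID -> frozenset of preapproved device IDs
    entitlements: dict      # user ID -> frozenset of permitted data sets

    def can_access(self, user: str, device: str, dataset: str) -> bool:
        # A device the user is not preapproved for yields no access at all,
        # not even to data the user is otherwise entitled to.
        if device not in self.approved_devices.get(user, frozenset()):
            return False
        return dataset in self.entitlements.get(user, frozenset())

policy = AccessPolicy(
    approved_devices={"contract-specialist-1": frozenset({"terminal-12"})},
    entitlements={"contract-specialist-1": frozenset({"contract-terms"})},
)
print(policy.can_access("contract-specialist-1", "terminal-12", "contract-terms"))    # True
print(policy.can_access("contract-specialist-1", "terminal-12", "engineering-data"))  # False
print(policy.can_access("contract-specialist-1", "terminal-40", "contract-terms"))    # False
```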

Or a doctor may not see a patient’s complete medical history without the patient’s permission. This would be a two-step process. First, the doctor would sign in on his or her device using the two-factor authentication described above. Then the patient would sign in on the same device, using the same two-factor authentication, to give the doctor permission to access the patient’s medical record.
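The two-step consent can be modeled as a check on who is currently signed in on one device. This is a hypothetical sketch: `DeviceSession`, the role names, and the `two_factor_ok` flag (standing in for the smartcard and biometric check) are illustrative assumptions.

```python
from dataclasses import dataclass, field

@dataclass
class DeviceSession:
    """Tracks who is signed in on one device (hypothetical model)."""
    device_id: str
    signed_in: dict = field(default_factory=dict)  # role -> person ID

    def sign_in(self, role: str, person_id: str, two_factor_ok: bool) -> None:
        # Every sign-in must pass the smartcard + biometric check.
        if not two_factor_ok:
            raise PermissionError(f"two-factor authentication failed for {person_id}")
        self.signed_in[role] = person_id

    def may_view_full_history(self, patient_id: str) -> bool:
        # Step 1: a doctor is signed in; step 2: this patient signed in on the same device.
        return "doctor" in self.signed_in and self.signed_in.get("patient") == patient_id

session = DeviceSession("exam-room-2")
session.sign_in("doctor", "dr-lee", two_factor_ok=True)
print(session.may_view_full_history("patient-881"))  # False: no patient consent yet
session.sign_in("patient", "patient-881", two_factor_ok=True)
print(session.may_view_full_history("patient-881"))  # True
```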

The security meta-data and parameters are stored on the ultra-secure data network (USDN). Any updates or changes must be made and approved through the system’s security governance function. No dynamic changes can be made until the changes are approved. In a political/cultural context, this governance process will be the most difficult to secure since users expect changes to be made “NOW” and the process doesn’t allow “NOW” to happen.
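The rule that no change takes effect until it is approved can be sketched as a queue of proposed changes that only a governance approver can put into force. `SecurityMetadata`, the key format, and the approver names are hypothetical, and the sketch omits auditing and everything else a real governance process would involve.

```python
from dataclasses import dataclass, field

@dataclass
class SecurityMetadata:
    """Security parameters that change only after governance approval."""
    approvers: frozenset                         # recognized governance approvers
    active: dict = field(default_factory=dict)   # settings currently in force
    pending: dict = field(default_factory=dict)  # change ID -> (key, value)
    _next_id: int = 0

    def propose(self, key: str, value: str) -> int:
        # A proposal is queued; it does not touch the active settings.
        self._next_id += 1
        self.pending[self._next_id] = (key, value)
        return self._next_id

    def approve(self, change_id: int, approver: str) -> None:
        # Only a recognized governance approver can put a queued change into force.
        if approver not in self.approvers:
            raise PermissionError(f"{approver} is not a governance approver")
        key, value = self.pending.pop(change_id)
        self.active[key] = value

meta = SecurityMetadata(approvers=frozenset({"security-board"}))
change = meta.propose("dr-lee/devices", "exam-room-2")
print(meta.active)  # {}
meta.approve(change, "security-board")
print(meta.active)  # {'dr-lee/devices': 'exam-room-2'}
```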

The Bridge

The second architectural component is the bridge from the Internet to the USDN. This is really the key component securing the USDN from attacks. And this is the component that was declared obsolete thirty years ago. In the early 1980s there were many proprietary data networks. To communicate data from one network to another required a network bridge.

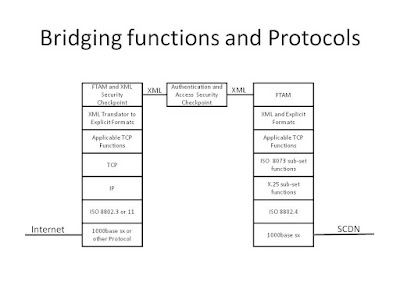

The diagram above is from the patent that I applied for. It shows an example of how changing the protocol layers, or stacks, creates a portcullis in the bridge that provides the ultra-security. On the left side of the bridge are the standard Internet protocols. Other than the top layer (called the Application Layer in the OSI model) and the bottom layer (the Physical Layer in the OSI model), all layers link and guide the communications between the sender and receiver.

Notice that, with the exception of the physical layer, the functional protocols on each side of the bridge are different. On the left side all protocols are current Internet standards. On the right side, however, the bridge uses protocols from the Open Systems Interconnection (OSI) suite. These protocols were abandoned in the 1990s in favor of the earlier TCP/IP suite, which at the time was less expensive and much less capable.
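To make the protocol swap concrete, here is a sketch of a bridge that terminates the Internet-side stack and re-sends only the payload under an OSI stack. The OSI protocols listed (ISO 8823 presentation, ISO 8327 session, TP4 transport per ISO 8073, CLNP network per ISO 8473) are real members of the OSI suite, but their use here is an assumption, not a transcription of the patent diagram; the `Frame` type and `cross_bridge` function are hypothetical.

```python
from dataclasses import dataclass

# Assumed protocol assignments for illustration; the patent diagram may differ.
INTERNET_STACK = {"transport": "TCP", "network": "IP"}
OSI_STACK = {
    "presentation": "ISO 8823",
    "session": "ISO 8327",
    "transport": "TP4 (ISO 8073)",
    "network": "CLNP (ISO 8473)",
}

@dataclass(frozen=True)
class Frame:
    stack: dict     # layer name -> protocol
    physical: str   # the only layer shared by both sides
    payload: bytes  # the data record being carried

def cross_bridge(inbound: Frame) -> Frame:
    # Terminate every Internet-side protocol; none of its headers cross over.
    # Only the payload is re-sent under a fresh OSI-side stack.
    return Frame(stack=dict(OSI_STACK), physical=inbound.physical, payload=inbound.payload)

inbound = Frame(stack=dict(INTERNET_STACK), physical="fiber", payload=b"<PartRecord/>")
outbound = cross_bridge(inbound)
print(outbound.stack["network"])  # CLNP (ISO 8473)
```

An exploit that depends on a quirk of TCP or IP has nothing to act on once the frame is on the right side, since no Internet-side layer survives the crossing.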

[Sidebar: “The first example of a superior principle is always less capable than a mature example of an inferior principle”].

This means that the entire USDN would use these OSI protocols. Any cyber attack software developed for the Internet protocols would have to be redesigned for the OSI protocols.

Even if hackers of whatever stripe did develop software capable of exploiting vulnerabilities in the OSI protocol stack, they would still need to get it onto the network, and the bridge has a portcullis in its middle.

This portcullis is designed to allow only data and records in well-defined formats to pass. This means that no documents can move across the bridge. Here, “documents” includes e-mail, word-processing documents, unformatted text, files, and any other unformatted data.

This stringent requirement eliminates nearly every attack vector used by hackers. For example, a Trojan horse attachment has no way into the system, because e-mail, let alone e-mail with attachments, is not allowed across the bridge.

As shown in the diagram, only data in specific and static XML formats is allowed to move through the portcullis. The XML data structures are installed in the portcullis only after approval using one of the governance processes.

So, for example, medical data would use an XML version of the international medical standards, engineering data would use an XML version of STEP, and so on. Only data that exactly follows those standards, and to which the user is entitled, would get through the portcullis. Initially, this would impose a very large overhead of security meta-data and access-control data about every individual.
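A sketch of the portcullis check, using Python’s standard `xml.etree.ElementTree`. It assumes each approved format can be described as a root tag with an exact, ordered list of plain-text fields; the two formats shown are hypothetical stand-ins for the XML versions of the medical standards and STEP, and `portcullis` is an illustrative name. A real portcullis would need a hardened parser, since the Python documentation warns that its standard XML modules are not secure against maliciously constructed data.

```python
import xml.etree.ElementTree as ET

# Hypothetical approved formats: root tag -> exact, ordered list of field tags.
APPROVED_FORMATS = {
    "PatientObservation": ["PatientID", "Code", "Value", "Unit", "Date"],
    "PartRecord": ["PartNumber", "Revision", "Material"],
}

def has_text(text) -> bool:
    return bool(text and text.strip())

def portcullis(xml_text: str, user_formats: set) -> bool:
    """Pass a record only if it matches an approved format the user is entitled to."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError:
        return False  # not well-formed XML (e.g. free text)
    expected = APPROVED_FORMATS.get(root.tag)
    if expected is None or root.tag not in user_formats:
        return False  # unknown format, or user not entitled to it
    if root.attrib or has_text(root.text):
        return False  # no attributes or loose text on the record itself
    fields = list(root)
    if [f.tag for f in fields] != expected:
        return False  # fields must match exactly, in order
    # Each field must be a plain-text leaf with no attributes or trailing text.
    return all(len(f) == 0 and not f.attrib and not has_text(f.tail) for f in fields)

record = ("<PartRecord><PartNumber>P-100</PartNumber>"
          "<Revision>B</Revision><Material>Ti-6Al-4V</Material></PartRecord>")
print(portcullis(record, {"PartRecord"}))                               # True
print(portcullis(record, {"PatientObservation"}))                       # False: not entitled
print(portcullis("Please open the attached invoice.", {"PartRecord"}))  # False
```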

The Network

The third architectural component is the network, which is based on petrified standards of the 1980s. Inside the portcullis-bridge, data would be free to move among the nodes of the network using the same OSI protocol stack used on the right side of the portcullis-bridge in the diagram.

Additionally, it would use improved versions of the Directory Service (X.500) standard. This would include using static routing meta-data (which many network analysts would say is not an improvement). However, static routing meta-data means that if an unauthorized node magically appeared on the USDN (because some hacker tapped one of the USDN lines) the node would be recognized as a threat immediately. Consequently, any attempt to breach the security imposed by the portcullis-bridge by directly attacking the network would fail, as long as good governance is in place.
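A sketch of how static routing meta-data exposes an unexpected node. The dictionary stands in for the X.500 directory entries, and `check_link` and the node names are hypothetical. This only checks the identities nodes claim; it assumes those identities are themselves authenticated.

```python
# Hypothetical static routing meta-data (a stand-in for X.500 directory entries):
# node ID -> the set of nodes it is physically linked to.
STATIC_TOPOLOGY = {
    "node-A": {"node-B", "node-C"},
    "node-B": {"node-A"},
    "node-C": {"node-A"},
}

def check_link(sender: str, receiver: str) -> str:
    """Classify a hop against the static routing meta-data."""
    if sender not in STATIC_TOPOLOGY or receiver not in STATIC_TOPOLOGY:
        return "threat: unknown node"
    if receiver not in STATIC_TOPOLOGY[sender]:
        return "threat: link not in routing meta-data"
    return "ok"

print(check_link("node-A", "node-B"))  # ok
print(check_link("node-X", "node-A"))  # threat: unknown node
print(check_link("node-B", "node-C"))  # threat: link not in routing meta-data
```

Because the table never changes without governance approval, anything not in it is suspect by definition; dynamic routing, by contrast, would try to accommodate the new node.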

Datastores

The last technical function is data storage. It uses a new twist on current hardware and software design for the storage of data and information: only specific data and records are stored, never files from outside the network.

An organization using a USDN-like system would have its data file structures created by authorized personnel inside the USDN. These file structures would follow the various authorized XML data structures. No free-form data such as e-mail or documents would be allowed. [Sidebar: remember, it’s much, much simpler to create documents from data than to glean data from documents.]

The only applications authorized to run on the USDN and its datastore computers are those that create, read, update, or delete records or data elements. Reading data includes reading for transfer and for summarization.
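A sketch of a datastore that accepts nothing but create, read, update, and delete requests on records with an approved structure. `RecordStore`, `Operation`, and the field names are hypothetical.

```python
from enum import Enum
from typing import Optional

class Operation(Enum):
    CREATE = "create"
    READ = "read"  # covers reading for transfer and for summarization
    UPDATE = "update"
    DELETE = "delete"

class RecordStore:
    """A datastore that accepts only record-level CRUD operations."""

    def __init__(self, record_fields: tuple):
        self._fields = set(record_fields)  # the approved record structure
        self._records = {}                 # key -> record (field -> value)

    def execute(self, op: str, key: str, record: Optional[dict] = None) -> Optional[dict]:
        operation = Operation(op)  # any non-CRUD request raises ValueError
        if operation is Operation.READ:
            return dict(self._records[key])
        if operation is Operation.DELETE:
            del self._records[key]
            return None
        # CREATE and UPDATE must supply a record with exactly the approved fields.
        if record is None or set(record) != self._fields:
            raise ValueError("record does not match the approved structure")
        exists = key in self._records
        if operation is Operation.CREATE and exists:
            raise KeyError(f"record {key!r} already exists")
        if operation is Operation.UPDATE and not exists:
            raise KeyError(f"record {key!r} does not exist")
        self._records[key] = dict(record)
        return None

store = RecordStore(("part_number", "revision"))
store.execute("create", "P-100", {"part_number": "P-100", "revision": "A"})
store.execute("update", "P-100", {"part_number": "P-100", "revision": "B"})
print(store.execute("read", "P-100"))  # {'part_number': 'P-100', 'revision': 'B'}
try:
    store.execute("run_macro", "P-100")
except ValueError:
    print("rejected: not a CRUD operation")
```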

For example, suppose the medical profession of a state, or of the United States, adopts the USDN to protect patients’ medical records. A medical researcher might be granted access to summaries of certain data elements from the records of patients who have a particular medical problem. This access would be granted through an approval process (part of governance) before the summaries are obtained.

The advantage is that the medical researcher has access to a complete set of data for the population of an area. The downside is that the researcher needs a well-formulated, defensible hypothesis in order to obtain the data, and that the governance processes take time.
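A sketch of approval-gated summarization, in which the researcher receives aggregate figures rather than individual records. The approval register, the `summarize` function, the condition name, and the data element are hypothetical.

```python
from statistics import mean

# Hypothetical governance register: (researcher, condition, data element) approvals.
APPROVED_SUMMARIES = {("researcher-7", "condition-X", "systolic_bp")}

def summarize(researcher: str, condition: str, element: str, records: list) -> dict:
    """Return aggregate figures only, and only under a prior approval."""
    if (researcher, condition, element) not in APPROVED_SUMMARIES:
        raise PermissionError("no governance approval for this summary")
    values = [r[element] for r in records if r["condition"] == condition]
    # Individual records never leave the datastore; only the summary does.
    return {"count": len(values), "mean": mean(values) if values else None}

records = [
    {"patient": "p1", "condition": "condition-X", "systolic_bp": 150},
    {"patient": "p2", "condition": "condition-X", "systolic_bp": 130},
    {"patient": "p3", "condition": "condition-Y", "systolic_bp": 120},
]
print(summarize("researcher-7", "condition-X", "systolic_bp", records))  # count 2, mean 140
```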

Governance

The governance function is the most critical of the five functions of the system’s architecture because it is the only one in which humans are involved, Big Time. As discussed above, many security functions are static and require administrative action to change their parameters and meta-data. While I expect the actual changes to the meta-data and parameters to be automated, the various decision-making processes will not be.

One obvious example is in banking. Some financial data must be kept secure within a financial institution and shared only with a client. Other data, in the form of transactions, must be shared between and among banks and other financial institutions.

The USDN security meta-data would determine which financial organizations data could be sent to, what data could be sent, and other characteristics of each transaction. The transaction would stay within the USDN and never cross any portion of the Internet; the destination organization would then retrieve it.
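A sketch of the interbank flow, assuming the security meta-data is a table of permitted record types per sender and receiver, and that the sending bank deposits a transaction inside the USDN for the destination to retrieve. `TRANSFER_RULES`, `Transaction`, `Exchange`, and the bank names are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical security meta-data: (sender, receiver) -> record types allowed to flow.
TRANSFER_RULES = {("bank-A", "bank-B"): {"PaymentInstruction"}}

@dataclass(frozen=True)
class Transaction:
    sender: str
    receiver: str
    record_type: str
    amount_cents: int

class Exchange:
    """Holds transactions inside the USDN until the destination retrieves them."""

    def __init__(self):
        self._waiting = {}  # receiver -> list of transactions awaiting pickup

    def send(self, tx: Transaction) -> None:
        allowed = TRANSFER_RULES.get((tx.sender, tx.receiver), set())
        if tx.record_type not in allowed:
            raise PermissionError(f"{tx.record_type} may not go from {tx.sender} to {tx.receiver}")
        self._waiting.setdefault(tx.receiver, []).append(tx)

    def retrieve(self, receiver: str) -> list:
        # The destination pulls its transactions; nothing is pushed over the Internet.
        return self._waiting.pop(receiver, [])

exchange = Exchange()
exchange.send(Transaction("bank-A", "bank-B", "PaymentInstruction", 125_000))
print(len(exchange.retrieve("bank-B")))  # 1
```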

As another example, if all defense contractors were on the USDN, then when teams formed to respond to a DoD Request For Proposal (RFP), the contractors and subcontractors on each team could share requirements and other data within their team. When the DoD chose the winning team, program/project, risk, and design data could be shared within the team and with the customer, without fear that a cyber attack on one of the subcontractors would lead to the capture or corruption of program or mission-critical data. [Sidebar: frequently a third- or fourth-tier subcontractor has more vulnerabilities than the prime contractor.]
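A sketch of team-scoped sharing, assuming governance maintains a register of team members and adds the customer after the award. `TeamRegister` and the team and organization names are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class TeamRegister:
    """Team membership maintained through governance (hypothetical model)."""
    teams: dict = field(default_factory=dict)  # team ID -> set of organizations

    def form_team(self, team: str, members: set) -> None:
        self.teams[team] = set(members)

    def add_member(self, team: str, member: str) -> None:
        # e.g. adding the customer once the contract is awarded
        self.teams[team].add(member)

    def can_share(self, team: str, sender: str, recipient: str) -> bool:
        members = self.teams.get(team, set())
        return sender in members and recipient in members

register = TeamRegister()
register.form_team("rfp-team-1", {"prime-co", "sub-tier-2", "sub-tier-3"})
print(register.can_share("rfp-team-1", "sub-tier-3", "prime-co"))      # True
print(register.can_share("rfp-team-1", "prime-co", "dod-customer"))    # False
register.add_member("rfp-team-1", "dod-customer")                      # after the award
print(register.can_share("rfp-team-1", "prime-co", "dod-customer"))    # True
```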

Issues

Again, “The first instance of a superior principle is always inferior to a mature example of an inferior principle.”

There are three issues with the creation of such a system.

The first is cost; creating an entire nationwide or worldwide network is very expensive in the startup phase. Creating (or really resurrecting in many cases) software to support the functions of the USDN will be very expensive. There is the cost of implementing software services to interface with existing organizational applications. Acquiring the physical cabling for the system will be expensive.

Modifying routers to use the new protocols will be expensive. Designing, constructing, and testing the new portcullis-bridge will be very expensive. Most of this investment will need to be done before one data element is protected.

The cost is more than a straight financial issue of building the system. It will threaten much of the multi-billion dollar cyber security industry’s income stream. This industry will market and lobby against building out the system.

The second issue may be used by that industry as an argument against the USDN: the system protects only data, not other types of information such as e-mail and documents. This is true. However, the core of any organization is its data. Documents can easily be constructed from data, but not the other way around.

The third issue, at least initially, is the response time of the system. Applications and users have come to expect near-instantaneous responses to dynamic requests. Initially, at least, I predict that response times will be measured in seconds, maybe many seconds. I saw this with Microsoft DOS (until version 3.1 it was bad), with other products from Microsoft, Apple, and Oracle [Sidebar: I worked with Oracle 4.1], and with many other hardware and software products. So it will be a rocky start, but ultimately the USDN will cost much less than today’s cycle of recover, rebuild, patch, upgrade, and get hacked again.

Summary

While the USDN does not protect an organization from cyber attacks, it does make the organization’s mission-critical data nearly invulnerable. An organization will be able to recover from an attack, and terrorists, cyber criminals, and others will find it nearly impossible to get at the personal data or mission-critical data that the USDN protects.

For anyone who is interested, please comment on this post. I have much more knowledge of the processes, technology, and construction involved than I can put in a post, and would be happy to discuss it.