From Applied Enterprise Architecture

You’ve made the big data investment. You believe Nucleus Research when it says that an investment in analytics returns a whopping thirteen (13) dollars for every one (1) dollar spent. Now it’s time to realize value. This series of posts provides a detailed set of steps you can take to unlock this value in a number of ways. As a simple use case, I’m going to use the perplexing management challenge of platform and tool optimization across the analytic community to illustrate each step. This post addresses the first of nine practical steps. Although it is lengthy, please stick with me; I think you will find it valuable. I’m going to approach platform and tool optimization the same way proven practice suggests every analytic decision should be made. In this case I will leverage the CRISP-DM method (there are others I have used, such as SEMMA from SAS) to put business understanding front and center at the beginning of this example.

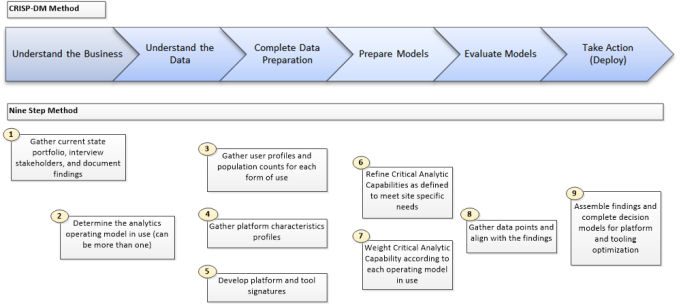

Yes, I will be eating my own dog food now (this is why a cute puppy is included in a technical post instead of the Hadoop elephant) and getting a real taste of what proven practice should look like across the analytic community. Recall the nine steps summarized in a prior post:

1) Gather current state analytics portfolio, interview stakeholders, and compile findings.

2) Determine the analytics operating models in use.

3) Refine Critical Analytic Capabilities as defined to meet site-specific needs.

4) Weight each Critical Analytic Capability according to each operating model in use.

5) Gather user profiles and simple population counts for each form of use.

6) Gather platform characteristics profiles.

7) Develop platform and tool signatures.

8) Gather data points and align with the findings.

9) Assemble findings and prepare a decision model for platform and tooling optimization.
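The author is emphatic that later work depends on earlier fieldwork, so the nine steps can be treated as an ordered checklist. The following is a minimal Python sketch, assuming the steps are strictly sequential (an assumption; in practice some overlap); the step wording follows the list above, while `next_step` and `can_start` are hypothetical helper names.

```python
from __future__ import annotations

# The nine steps in order (wording follows the list above).
STEPS = [
    "Gather current state analytics portfolio, interview stakeholders, and compile findings",
    "Determine the analytics operating models in use",
    "Refine Critical Analytic Capabilities to meet site-specific needs",
    "Weight each Critical Analytic Capability according to each operating model in use",
    "Gather user profiles and simple population counts for each form of use",
    "Gather platform characteristics profiles",
    "Develop platform and tool signatures",
    "Gather data points and align with the findings",
    "Assemble findings and prepare a decision model for platform and tooling optimization",
]


def next_step(completed: set[int]) -> int | None:
    """Return the 1-based number of the first incomplete step, or None if all are done."""
    for number, _ in enumerate(STEPS, start=1):
        if number not in completed:
            return number
    return None


def can_start(step: int, completed: set[int]) -> bool:
    """Assumes strict sequencing: a step may start only when every earlier step is done."""
    if not 1 <= step <= len(STEPS):
        raise ValueError(f"step must be between 1 and {len(STEPS)}")
    return all(n in completed for n in range(1, step))
```

For example, with only step 1 finished, `next_step({1})` points to step 2 and `can_start(3, {1})` is `False`.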

Using the CRISP-DM method as a guideline, we find that each of the nine steps maps to a phase of the CRISP-DM method, as illustrated in the following diagram.

Note that there is some overlap between business understanding and data understanding. The models we will be preparing will use a combination of working papers, logical models, databases, and the Decision Model and Notation (DMN) standard from the OMG to wrap everything together. In this example the output product is less about deploying or embedding an analytic decision and more about taking action based on the results of this work.

Step One – Gather Current State Portfolio

In this first step we are going to gather a deep understanding of what already exists within the enterprise and learn how the work effort is organized. Each examination should include, at a minimum:

- Organization (including its primary and supporting processes)

- Significant Data Sources

- Analytic Environments

- Analytic Tools

- Underlying technologies in use

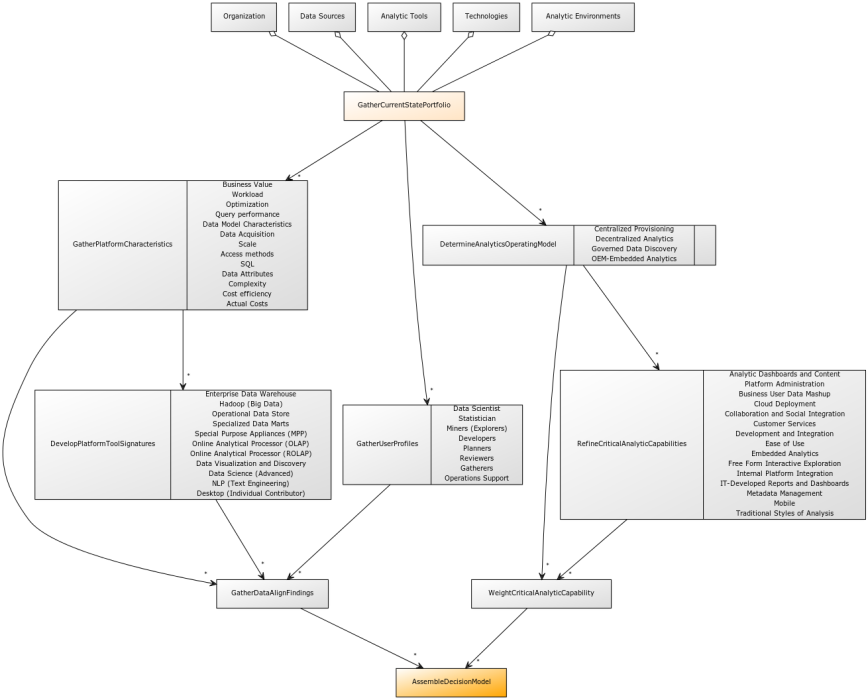

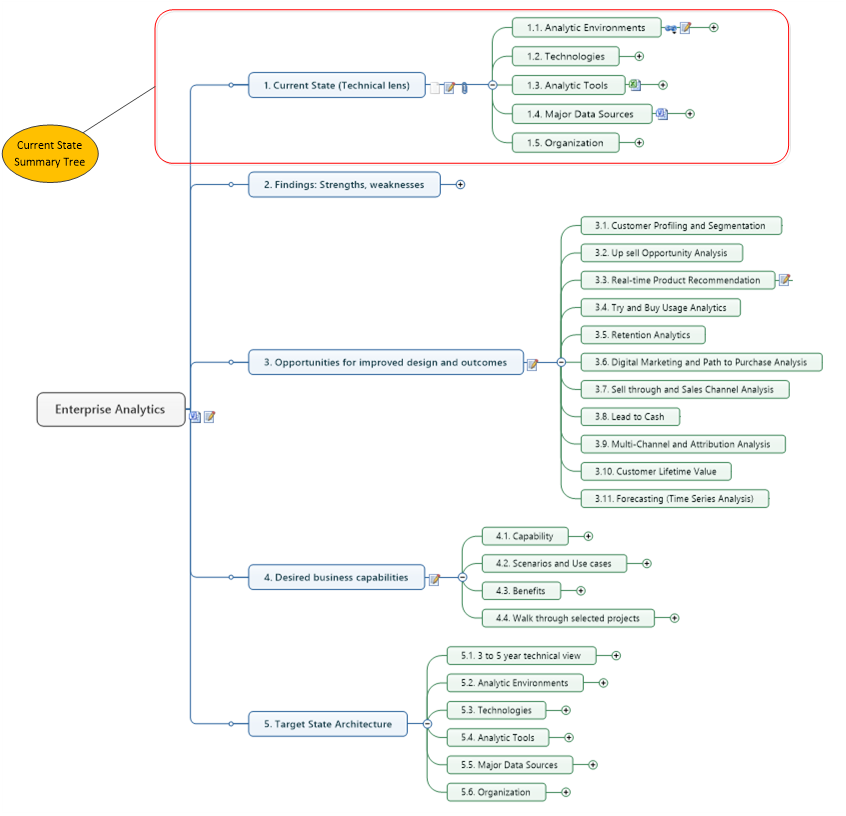

The goal is to gather the current state analytics portfolio, interview stakeholders, and document our findings. This will become an integral part of the working papers we build on in the steps that follow, and it is an important piece of the puzzle we are solving. Do not even think about proceeding until this is complete. The following diagram illustrates the dependencies between this fieldwork and each component of the solution.

Organization

If form follows function, this is where we begin to uncover the underlying analytic processes and how the business is organized. Evaluating the organization will provide invaluable clues about which operating models are in use. For example, if a business unit is organized outside of IT and reports to a business stakeholder, you will most likely find a decentralized analytics model in addition to the centralized provisioning most analytic communities already have in place.
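The organization-chart heuristic above can be written down as a simple rule to apply while reviewing each analytic unit. This is a hypothetical sketch: the `AnalyticUnit` fields and `operating_model_hints` are illustrative assumptions, and the output is only a hint to confirm in the stakeholder interviews, not a finding.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class AnalyticUnit:
    """A unit found on the org chart (hypothetical fields)."""
    name: str
    inside_it: bool            # organized within IT?
    reports_to_business: bool  # reports to a business stakeholder?


def operating_model_hints(units: list[AnalyticUnit]) -> set[str]:
    """Suggest operating models to probe in interviews; hints, not findings."""
    hints: set[str] = set()
    for unit in units:
        if unit.inside_it:
            # Centralized provisioning is the usual starting point.
            hints.add("centralized")
        elif unit.reports_to_business:
            # A unit outside IT reporting to the business suggests decentralization.
            hints.add("decentralized")
    return hints
```

An org chart with both an IT-based competency center and a business-aligned analytics team yields both hints, matching the "in addition to" case described above.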

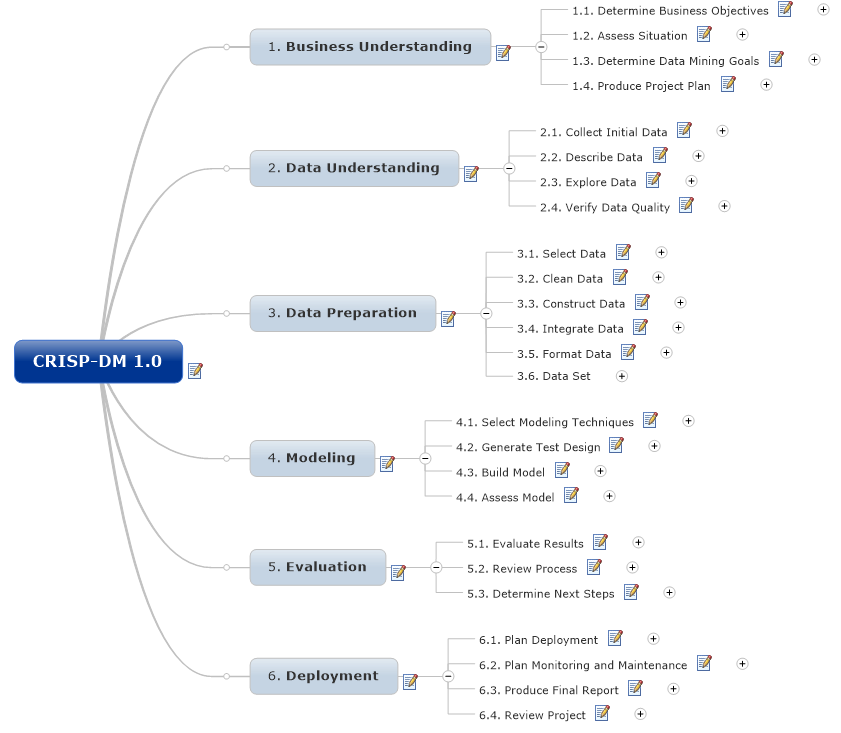

Start with the organization charts, but do not stop there. I recommend getting closer to reality in the interview process to really understand what is occurring in the community; examining the underlying processes will make this clear. For example, what is the analytic community really doing? Do they use a standard method (CRISP-DM) or something else? An effective way to look beyond the simple organization charts (which are never up to date and are notorious for mislabeling what people are actually doing) is to use a generally accepted model such as CRISP-DM to organize the stakeholder interviews. This lets us truly understand what is typically performed, by whom, and using which processes, as well as where boundary conditions exist or, in the worst case, are undefined. An example is in order. Using the CRISP-DM model, we see a couple of clear activities that typically occur across all analytic communities. This set of processes is summarized in the following diagram.

Gathering the analytic inventory and organizing the interviews now becomes an exercise in knowing what to look for using this process model. For example, diving a little deeper we can now explore how modeling is performed during our interviews guided by a generally accepted method. We can structure questions around the how, who, and what is performed for each expected process or supporting activity. Following up on this line of questioning should normally lead to samples of the significant assets which are collected and managed within an analytic inventory. Let’s just start with the modeling effort and a few directed questions.

- Which organization is responsible for the design, development, testing, and deployment of the models?

- How do you select which modeling techniques to use? Where are the assumptions captured?

- How do you build the models?

- Where do I find the following information about each model?

  - Parameter, Variable Pooling Settings

  - Model Descriptions

  - Objectives

  - Authoritative Knowledge Sources Used

  - Business rules

  - Anticipated processes used

  - Expected Events

  - Information Sources

  - Data sets used

  - Description of any Implementation Components needed

  - A Summary of Organizations Impacted

  - Description of any Analytic Insight and Effort needed

- Are anticipated reporting requirements identified?

- How is model testing designed and performed?

- Is a regular assessment of the model performed to recognize decay?
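One way to make these questions actionable is to treat each answer as a field in an analytic inventory record, so unanswered questions surface as explicit gaps. The sketch below assumes a flat record with hypothetical field names mirroring the question list; a real inventory or metadata repository would be considerably richer.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class ModelInventoryEntry:
    """One row of a hypothetical analytic inventory; None means nobody could point to it."""
    model_name: str
    responsible_org: str | None = None
    parameter_settings: str | None = None
    description: str | None = None
    objectives: str | None = None
    knowledge_sources: str | None = None
    business_rules: str | None = None
    anticipated_processes: str | None = None
    expected_events: str | None = None
    information_sources: str | None = None
    data_sets: str | None = None
    implementation_components: str | None = None
    organizations_impacted: str | None = None
    insight_and_effort: str | None = None
    reporting_requirements: str | None = None
    testing_approach: str | None = None
    decay_assessment: str | None = None


def gaps(entry: ModelInventoryEntry) -> list[str]:
    """Return the names of fields that are still unanswered."""
    return [f.name for f in fields(entry) if getattr(entry, f.name) is None]
```

A long `gaps()` list for most models is the code equivalent of the uncomfortable silence described next.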

When you hear the uncomfortable silence and eyes point to the floor, you have just uncovered one meaningful challenge. Most organizations I have consulted with DO NOT have an analytic inventory, much less a metadata repository (or even a simple information catalog) that I would expect to support a consistent, repeatable process. This is a key finding for another kind of work effort that is outside the scope of this discussion. All we are doing here is trying to understand what is being used to produce and deploy information products within the analytic community. And is form really following function, as the organization charts have tried to depict? Really?

An important note: we are not in a process improvement effort, not just yet. Our objective is platform and tool optimization across the analytic community. If form really does follow function, it should be clear after this step which platforms and tools are enabling (or inhibiting) an effective response to this important and urgent problem across the organization.

Significant Data Sources

The next activity in this step is to gain a deeper understanding of what data is needed to meet the new demands and business opportunities made possible with big data. Let’s begin by understanding how the raw materials, or data stores, can be categorized. Data may come from any number of sources, including one or more of the following:

- Structured data (from tables, records)

- Demographic data

- Time series data

- Web log data

- Geospatial data

- Clickstream data from websites

- Real-time event data

- Internal text data (e.g., from e-mails, call center notes, claims)

- External social media text data

If you are lucky, there will be an enterprise data model or someone in enterprise architecture who can point to the major data sources and where each system of record resides. These are most likely organized by subject area (Customer, Account, Location, etc.) and almost always include schema-on-write structures. Although the focus is big data, it is still important to recognize that the vast majority of data collected originates in transactional systems (e.g., point of sale). If they are present, look for curated data sets and information catalogs (better yet, an up-to-date metadata repository such as Adaptive or Alation) to accelerate this task.

Data in and of itself is not very useful until it is converted or processed into useful information, so here is a useful way to characterize it in general. The flow of information from sources external to the organization across applications and the analytic community can take many forms. Major data sources can be grouped into three (3) categories:

- Structured Information

- Semi-Structured Information

- Unstructured Information

While modeling techniques for structured information have been around for some time, semi-structured and unstructured information formats are growing in importance. Unstructured data presents a more challenging effort. Because many believe up to 80% of the information in a typical organization is unstructured, this must be an important area of focus in an overall information management strategy. It is an area, however, where accepted best practices are not nearly as well defined. Data standards provide an important mechanism for structuring information. Controlled vocabularies, where available, also help by encouraging the use of standards that reduce complexity and improve reusability. When we get to modeling platform characteristics and signatures in the later steps, the output of this work will become increasingly valuable.
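To see how the source types listed earlier fall into the three categories, a simple lookup can tally the mix found during fieldwork. The assignment of each source type to a category below is an illustrative assumption (web logs, clickstream, and event feeds as semi-structured; free text as unstructured) and should be adjusted to what the data actually looks like at your site; the key names are hypothetical.

```python
from __future__ import annotations

from collections import Counter
from enum import Enum


class InfoCategory(Enum):
    STRUCTURED = "Structured Information"
    SEMI_STRUCTURED = "Semi-Structured Information"
    UNSTRUCTURED = "Unstructured Information"


# Illustrative assumption: how each source type maps to a category.
SOURCE_CATEGORY = {
    "structured": InfoCategory.STRUCTURED,
    "demographic": InfoCategory.STRUCTURED,
    "time_series": InfoCategory.STRUCTURED,
    "geospatial": InfoCategory.STRUCTURED,
    "web_log": InfoCategory.SEMI_STRUCTURED,
    "clickstream": InfoCategory.SEMI_STRUCTURED,
    "real_time_event": InfoCategory.SEMI_STRUCTURED,
    "internal_text": InfoCategory.UNSTRUCTURED,
    "external_social_text": InfoCategory.UNSTRUCTURED,
}


def category_mix(source_kinds: list[str]) -> Counter:
    """Count inventoried sources per category; raises KeyError for an unmapped kind."""
    return Counter(SOURCE_CATEGORY[kind] for kind in source_kinds)


def unstructured_share(source_kinds: list[str]) -> float:
    """Fraction of inventoried sources that are unstructured (0.0 for an empty list)."""
    if not source_kinds:
        return 0.0
    return category_mix(source_kinds)[InfoCategory.UNSTRUCTURED] / len(source_kinds)
```

Note that this counts sources, not volume; the 80% figure above refers to information, so weight each source by its size if that data point is available.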

Analytic Landscape

I have grouped the analytic environments, tools, and underlying technologies together in this step because they are usually the easiest data points to gather and compile.

- Environments

Environments are usually described as platforms and can take several different forms. For example, you can group these according to intended use as follows:

– Enterprise Data Warehouse

– Specialized Data Marts

– Hadoop (Big Data)

– Operational Data Stores

– Special Purpose Appliances (MPP)

– Online Analytical Processing (OLAP)

– Data Visualization and Discovery

– Data Science (Advanced Platforms such as the SAS Data Grid)

– NLP and Text Engineering

– Desktop (Individual Contributor; yes, think how pervasive Excel and Access are)

- Analytic Tools

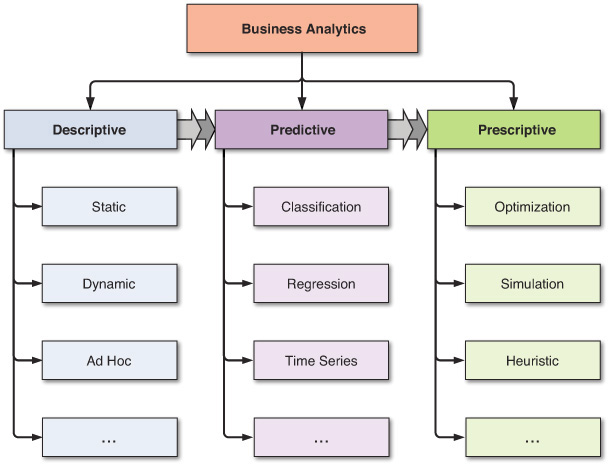

Gathering and compiling tools is a little more interesting. There is a wide variety of tools designed to meet different needs, and there is significant overlap in the functions they deliver. One way to approach this is to group them by intended use. Try using the INFORMS taxonomy, for example, to group the analytic tools you find. Their work identified three hierarchical but sometimes overlapping categories of analytics: descriptive, predictive, and prescriptive. Because these groups are hierarchical, they can also be viewed in terms of the analytics maturity of the organization. The three types of data analysis are:

- Descriptive (some have split Diagnostic into its own category)

- Predictive (forecasting)

- Prescriptive (optimization and simulation)

This simple classification scheme can be extended with lower-level nodes for improved granularity if needed. The following diagram depicts the simple taxonomy developed by INFORMS and widely adopted by industry leaders as well as academic institutions.

Even though these three groupings of analytics are hierarchical in complexity and sophistication, the boundaries between them are not cleanly separable in practice. That is, the analytics community may be using tools to support descriptive analytics (e.g., dashboards, standard reporting) while at the same time using other tools for predictive and even prescriptive analytics in a somewhat piecemeal fashion. And don’t forget to include the supporting tools, which may include metadata functions, modeling notation, and collaborative workspaces for use within the analytic community.
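Because one tool can serve several INFORMS categories at once, grouping the tool inventory is a many-to-many exercise. The sketch below assumes a hypothetical mapping from capability keywords to categories and hypothetical tool names; capabilities it does not recognize (such as metadata or collaboration features of supporting tools) are simply ignored and would need their own bucket.

```python
from __future__ import annotations

# Hypothetical capability keywords mapped to INFORMS categories (an assumption).
CAPABILITY_CATEGORY = {
    "dashboards": "descriptive",
    "standard reporting": "descriptive",
    "ad hoc query": "descriptive",
    "forecasting": "predictive",
    "classification": "predictive",
    "optimization": "prescriptive",
    "simulation": "prescriptive",
}
ORDER = ["descriptive", "predictive", "prescriptive"]


def categorize(capabilities: set[str]) -> list[str]:
    """Return the categories a tool covers, in order of sophistication."""
    found = {CAPABILITY_CATEGORY[c] for c in capabilities if c in CAPABILITY_CATEGORY}
    return [cat for cat in ORDER if cat in found]


def group_tools(inventory: dict[str, set[str]]) -> dict[str, list[str]]:
    """Group tool names by category; a tool spanning categories appears under each."""
    grouped: dict[str, list[str]] = {cat: [] for cat in ORDER}
    for tool, caps in sorted(inventory.items()):
        for cat in categorize(caps):
            grouped[cat].append(tool)
    return grouped
```

Tools that show up under more than one category are the overlaps worth discussing when platform and tool signatures are developed later.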

- Underlying technologies in use

Technologies in use can be described and grouped as follows (this is just a simple example and is not intended to be an exhaustive compilation):

- Relational Databases

- MPP Databases

- NoSQL databases

  - Key-value stores

  - Document store

  - Graph

  - Object database

  - Tabular

  - Tuple store, Triple/quad store (RDF) database

  - Multi-Value

  - Multi-model database

- Semi and Unstructured Data Handlers

- ETL or ELT Tools

- Data Synchronization

- Data Integration – Access and Delivery

Putting It All Together

Now that we have compiled the important information needed, where do we put it for the later stages of the work effort? In an organization of any size this can be quite a challenge, simply because of the number of critical facets we will need later, the number of data points, and the need to re-purpose and leverage this information in a number of views and perspectives.

Here is what has worked for me. First, use a mind or concept map (Mind Jet, for example) to organize and store URIs to the underlying assets. Structure, flexibility, and the ability to export and consume data from a wide variety of sources are a real plus. The following diagram illustrates an example template I use to organize an effort like this. Note that the icons (notepad, paperclip, and MS-Office), even at this high level, point to the wide variety of content gathered and compiled in the fieldwork (including interview notes and observations).

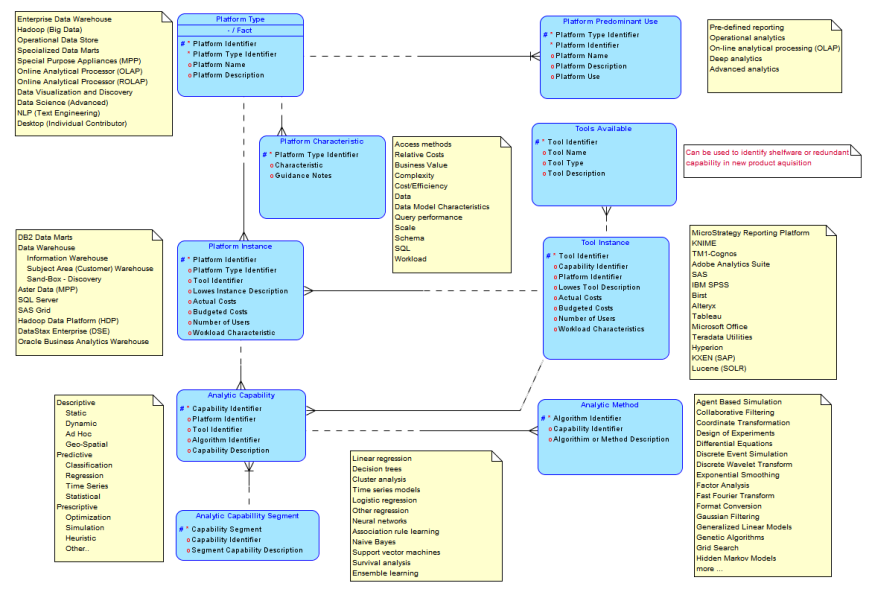
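If the map needs to feed a database later, its topics and attached URIs can be flattened into rows. This is a hypothetical sketch: `MapNode` is not Mind Jet’s export format, just an assumed in-memory tree of topics with links to the underlying assets, and the example URIs are placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MapNode:
    """A topic in a hypothetical mind-map tree, with links to underlying assets."""
    topic: str
    uris: list[str] = field(default_factory=list)
    children: list[MapNode] = field(default_factory=list)


def flatten(node: MapNode, path: tuple[str, ...] = ()) -> list[tuple[str, str]]:
    """Return (topic path, uri) rows in depth-first order, ready to load into a table."""
    here = path + (node.topic,)
    rows = [(" / ".join(here), uri) for uri in node.uris]
    for child in node.children:
        rows.extend(flatten(child, here))
    return rows
```

Keeping the full topic path on each row preserves the map’s structure, so the same assets can be regrouped into other views later.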

For larger organizations without an existing Project Portfolio Management (PPM) tool or metadata repository that supports customizations (extensions, flexible data structures), it is sometimes best to augment the maps with a logical and physical database populated with the values already collected and organized in specific nodes of the map. A partial fragment of a logical model would look something like this, where some sample values are captured in the yellow notes.
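As a rough illustration of such a database, the sketch below expresses a small logical model in SQLite DDL using Python’s built-in `sqlite3` module. The tables and columns (`organization`, `environment`, `tool`, `tool_usage`) are hypothetical assumptions chosen to mirror the categories gathered in this step; the query shows the kind of question the model can answer once populated.

```python
import sqlite3

# Hypothetical logical model fragment; table and column names are assumptions.
DDL = """
CREATE TABLE organization (
    org_id      INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    reports_to  TEXT
);
CREATE TABLE environment (
    env_id       INTEGER PRIMARY KEY,
    name         TEXT NOT NULL,
    intended_use TEXT NOT NULL  -- e.g. 'Enterprise Data Warehouse'
);
CREATE TABLE tool (
    tool_id          INTEGER PRIMARY KEY,
    name             TEXT NOT NULL,
    informs_category TEXT CHECK (informs_category IN ('descriptive', 'predictive', 'prescriptive'))
);
-- Which organization uses which tool on which environment.
CREATE TABLE tool_usage (
    org_id  INTEGER NOT NULL REFERENCES organization(org_id),
    tool_id INTEGER NOT NULL REFERENCES tool(tool_id),
    env_id  INTEGER NOT NULL REFERENCES environment(env_id),
    PRIMARY KEY (org_id, tool_id, env_id)
);
"""


def build(conn: sqlite3.Connection) -> None:
    """Create the schema on an open connection."""
    conn.executescript(DDL)


def tools_per_environment(conn: sqlite3.Connection) -> list[tuple[str, int]]:
    """Count distinct tools in use on each environment (0 when none are recorded)."""
    return conn.execute(
        """
        SELECT e.name, COUNT(DISTINCT u.tool_id)
        FROM environment e LEFT JOIN tool_usage u ON u.env_id = e.env_id
        GROUP BY e.env_id, e.name
        ORDER BY e.name
        """
    ).fetchall()
```

An in-memory connection (`sqlite3.connect(":memory:")`) is enough to try the schema before committing to a physical design.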

Armed with the current state analytics landscape (processes and portfolio), stakeholders’ contributions, and the compiled findings, we are now ready to move on to the real work at hand. In step (2) we will use this information to determine, based on the facts, which analytics operating models are in use.

If you enjoyed this post, please share it with anyone who may benefit from reading it. I am planning to compile all the materials and tools used in this series in one place and am still unsure what form and content would be best for your professional use. Please take a few minutes and let me know what form and format you would find most valuable.

Suggested content for premium subscribers: Big Data Analytics - Unlock Breakthrough Results (Step 1):

- CRISP-DM Mind Map (for use with Mind Jet, see https://www.mindjet.com/ for more)

- UML for dependency diagrams (use with yUML, see http://yuml.me/)

- Enterprise Analytics Mind Map (for use with Mind Jet)

- Logical Data Model (DDL; use with your favorite tool)

- Analytics Taxonomy, Glossary (MS-Office)

- Reference Library with Supporting Documents

